Overview

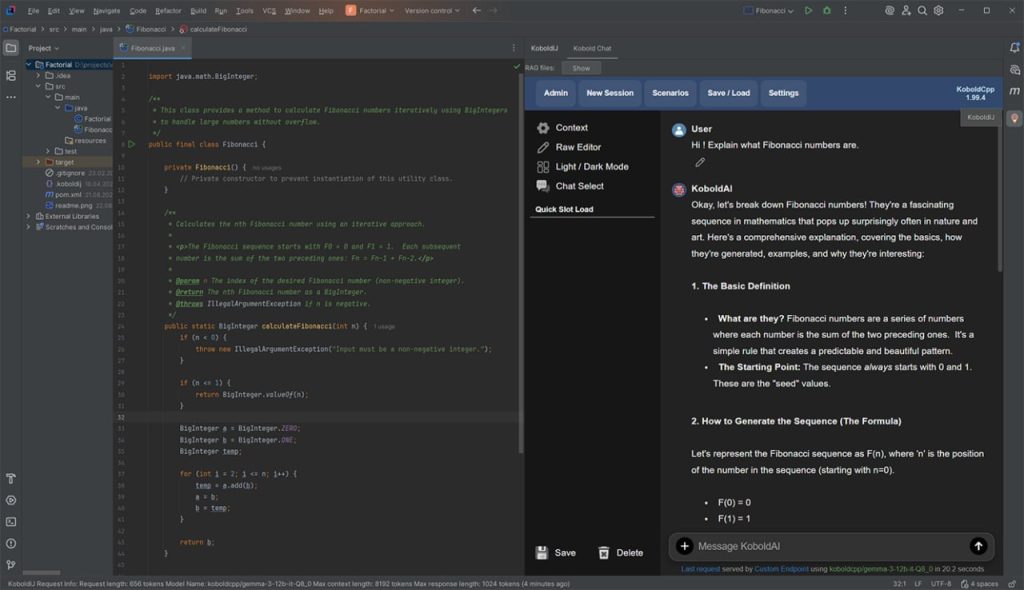

The KoboldIJ plugin for IntelliJ IDEA integrates a self-hosted Large Language Model (LLM), powered by KoboldAI (KoboldCpp), into your IDE. Developers can send fast, secure, and free LLM requests directly from their development environment without relying on external APIs or on the usage policies of public LLM services. You can use any public KoboldAI (KoboldCpp) compatible model in GGUF format, including models fine-tuned to your specific needs with LoRA (Low-Rank Adaptation).

KoboldAI (KoboldCpp) runs on all major operating systems and requires minimal setup: download a model and start using local LLMs to enhance your coding experience.

Key Features

- Simple Setup. Easily configure the plugin and connect to KoboldCpp without complex installations.

- Execution of typical tasks. The plugin's main purpose is to perform typical development tasks intelligently.

- Compatibility. KoboldCpp works on a variety of popular operating systems, so you can download models and use them immediately.

- Local & Secure. With a locally hosted LLM, you keep full control over your data, which ensures security and independence from external services.

- Integration with commercial LLMs. You can also use a commercial LLM (Qwen).

- Image generation. You are not limited to working with code: the plugin can generate images from a text description or from another image.

- Image recognition. You can extract text or descriptions from images and use images as context for LLM queries.

- Context Length. The context length is limited only by the model you are using.

Plugin Workflow

- In-Code Prompting. Write a request directly in your code, and the plugin replaces it with the LLM's response.

- Advanced Queries. For more complex requests, the second mode (available from the menu) opens a dedicated LLM query window.

- Reusable Queries. The third mode lets you save your LLM queries and reuse them later.

- Interactive Chat Mode. The fourth mode offers a dialog-style interaction for refining queries, similar to a traditional chatbot.
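The in-code prompting idea can be pictured with a minimal Java sketch. It is not taken from KoboldIJ: the marker syntax `// llm: <request>` and the names `InlinePromptSketch` and `expand` are hypothetical, and the LLM call is passed in as a function so the text manipulation stays self-contained.

```java
import java.util.function.UnaryOperator;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class InlinePromptSketch {
    // HYPOTHETICAL marker: a whole-line comment of the form "// llm: <request>".
    private static final Pattern MARKER =
            Pattern.compile("(?m)^([ \\t]*)//[ \\t]*llm:[ \\t]*(.+)$");

    private InlinePromptSketch() {}

    /**
     * Replaces every marker line in {@code source} with the LLM's answer to the request
     * it contains, re-indenting each answer line to match the marker's indentation.
     */
    static String expand(String source, UnaryOperator<String> llm) {
        Matcher m = MARKER.matcher(source);
        StringBuilder out = new StringBuilder();
        while (m.find()) {
            String indent = m.group(1);
            String answer = llm.apply(m.group(2).strip());
            String indented = indent + answer.replace("\n", "\n" + indent);
            // quoteReplacement keeps '$' and '\' in generated code from being read as group references.
            m.appendReplacement(out, Matcher.quoteReplacement(indented));
        }
        m.appendTail(out);
        return out.toString();
    }
}
```

Passing the model call as a `UnaryOperator<String>` keeps the sketch testable without a running server; in practice that function would forward the request to KoboldCpp and return the generated text.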

Getting Started

- Download the free KoboldCpp launcher:

  - GitHub project home page

  - Direct link to the release (v1.86)

- Get one or more LLM models from Hugging Face:

  - List of supported models

  - Direct link to gemma-3-4B

- Get one or more image generation models from Hugging Face.

- Get one or more vision (image recognition) models from Hugging Face.

- Install the KoboldIJ plugin.

- Run the KoboldCpp launcher with an LLM model.

- Get prompts from the public Cursor prompt repository.

Run/Configure KoboldCpp

- Run koboldcpp.exe (on Windows).

- Open the Quick Launch tab and select an LLM model (mistral2.2-7B).

- Open the Image Gen tab and select an image generation model (SDXL Lightning).

- Click the Launch button to start processing requests.
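Once KoboldCpp is running, any HTTP client can send it a generation request. The Java 17 sketch below builds a POST to KoboldCpp's KoboldAI-compatible `/api/v1/generate` endpoint, assuming the default local port 5001. It is not taken from KoboldIJ's source; the class name `KoboldClient` is hypothetical, and the JSON escaping is hand-rolled only to avoid a third-party dependency.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

final class KoboldClient {
    // ASSUMPTION: KoboldCpp runs locally on its default port (5001).
    static final URI GENERATE_URI = URI.create("http://localhost:5001/api/v1/generate");

    private KoboldClient() {}

    /** Encodes a Java string as a JSON string literal (quotes, backslashes, control characters). */
    static String jsonString(String s) {
        StringBuilder sb = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"' -> sb.append("\\\"");
                case '\\' -> sb.append("\\\\");
                case '\n' -> sb.append("\\n");
                case '\r' -> sb.append("\\r");
                case '\t' -> sb.append("\\t");
                default -> {
                    if (c < 0x20) {
                        sb.append(String.format("\\u%04x", (int) c)); // remaining control characters
                    } else {
                        sb.append(c);
                    }
                }
            }
        }
        return sb.append('"').toString();
    }

    /** JSON body: the prompt text and the maximum number of tokens to generate. */
    static String body(String prompt, int maxLength) {
        return "{\"prompt\":" + jsonString(prompt) + ",\"max_length\":" + maxLength + "}";
    }

    static HttpRequest request(String prompt, int maxLength) {
        return HttpRequest.newBuilder(GENERATE_URI)
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(body(prompt, maxLength)))
                .build();
    }

    /** Sends the request and returns the raw JSON reply. */
    static String generate(String prompt, int maxLength) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        HttpResponse<String> response =
                client.send(request(prompt, maxLength), HttpResponse.BodyHandlers.ofString());
        return response.body();
    }
}
```

The reply has the shape `{"results":[{"text":"..."}]}`; a real client would parse it with a JSON library such as Jackson or Gson rather than by hand.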

All-in-one single-click Starter Pack!

If you just want a ready-made template to try everything out, this pack comes with Gemma3-4B (text & vision), DeliberateV2 (image generation), arctic-snowflake-m (embedding model), OuteTTS (text-to-speech), and Whisper-Base (voice recognition). Right-click and save the .kcppt file to your PC, then open it in KoboldCpp. Recommended for GPUs with 12 GB of memory or more. Feel free to edit the template and remove components you don't need.

More configurations: https://huggingface.co/koboldcpp/kcppt/tree/main

Download

You can install the KoboldIJ plugin from the JetBrains Marketplace for IntelliJ IDEA.

Video Tutorial & Screenshots

Default Prompts

LLM used: Codestral-22B

Prompt structure: each LLM request consists of two components:

| Parameter name | Description |
|---|---|
| memory (part 1) | Text that is always injected at the start of every prompt sent to the AI, such as Java code requirements. For example: "You are an expert in Java programming, Maven, JUnit, and related Java technologies." |
| prompt (part 2) | The prompt text entered by the user |
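A minimal sketch of how the two components could be combined into a single request, assuming the memory text is simply placed before the user's prompt with a blank line in between. The separator and the `PromptComposer` name are assumptions for illustration, not the plugin's documented behavior.

```java
final class PromptComposer {
    private PromptComposer() {}

    /** Joins the memory (part 1) and the user's prompt (part 2) into one prompt string. */
    static String compose(String memory, String userPrompt) {
        String prompt = userPrompt.strip();
        if (memory == null || memory.isBlank()) {
            return prompt; // no memory configured: send the user's prompt alone
        }
        // ASSUMPTION: a blank line separates the memory from the prompt.
        return memory.strip() + "\n\n" + prompt;
    }
}
```

Recent KoboldCpp versions also document a separate `memory` field in the generate API, which lets the server prepend the text instead; check the API reference for your KoboldCpp version before relying on it.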

Links:

- https://dev.to/techiesdiary/chatgpt-prompts-for-code-review-and-debugging-48j

- ChatGPT Prompts for Developers: Code Explanation

- https://www.learnprompt.org/chat-gpt-prompts-for-coding/

| Type of Prompt | Prompt |
|---|---|
| Memory | You are an expert in Java programming, Spring Boot, Spring Framework, Maven, JUnit, and related Java technologies. Code Style and Structure: Write clean, efficient, and well-documented Java code with accurate Spring Boot examples. Use Spring Boot best practices and conventions throughout your code. Implement RESTful API design patterns when creating web services. Use descriptive method and variable names following the camelCase convention. Structure Spring Boot applications into controllers, services, repositories, models, and configurations. |
| Explain the code | Explain the Java code. You are tasked with analyzing and explaining the provided Java code snippet. Your explanation should cover the purpose of the code, its functionality, and any important concepts or techniques used. Instructions: 1. Read the provided Java code carefully. 2. Write a detailed explanation of what the code does, step by step. 3. Describe any variables, functions, or logic used in the code and their roles. 4. Identify any important concepts, algorithms, or techniques employed. 5. Discuss the efficiency, readability, and potential improvements of the code. 6. Provide examples or scenarios where the code might be useful. Code snippet: ${context.source} |
| Code Review | Code review of the Java class. Please review this Java code. Can you spot any potential problems with this code? Code snippet: ${context.source} |
| Improve the code | Suggest improvements to optimize this Java method. Code snippet: ${context.source} |
| Write JavaDoc | Write only a JavaDoc comment for the method, following the standard JavaDoc description conventions, and nothing more. The comment should be precise, clear, and objective, conveying technical or specialized information in an accessible way. Code snippet: ${context.source} |
| Fix Bugs | Here is a Java method that contains some bugs. To find them, determine the purpose of the method, then rewrite it without the bugs. Code snippet: ${context.source} |
| Improve Prompt | Given the user’s initial prompt ${req_txt}, enhance it. Instructions: 1. Start with clear, precise instructions placed at the beginning of the prompt. 2. Include specific details about the desired context, outcome, length, format, and style. 3. Provide examples of the desired output format, if possible. 4. Use appropriate leading words or phrases to guide the desired output, especially if code generation is involved. 5. Avoid vague or imprecise language. 6. Rather than only stating what not to do, provide guidance on what should be done instead. 7. Make sure the revised prompt stays true to the user’s original intent. |
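The default prompts contain `${...}` placeholders such as `${context.source}` (the code snippet) and `${req_txt}` (the user's initial prompt). A minimal Java sketch of filling them in, assuming a simple name-to-value lookup; `PromptTemplate` and `render` are hypothetical names, and unknown placeholders are deliberately left as-is so that missing values stay visible.

```java
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class PromptTemplate {
    // Matches ${name}, where name may contain letters, digits, '_' and '.'.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\$\\{([A-Za-z0-9_.]+)\\}");

    private PromptTemplate() {}

    static String render(String template, Map<String, String> values) {
        Matcher m = PLACEHOLDER.matcher(template);
        StringBuilder out = new StringBuilder();
        while (m.find()) {
            String value = values.get(m.group(1));
            // Unknown placeholders are kept verbatim so missing values are easy to spot.
            String replacement = (value != null) ? value : m.group();
            // quoteReplacement stops '$' or '\' inside the code snippet being read as group references.
            m.appendReplacement(out, Matcher.quoteReplacement(replacement));
        }
        m.appendTail(out);
        return out.toString();
    }
}
```

For example, rendering the "Improve the code" prompt with `Map.of("context.source", selectedCode)` appends the selected code after "Code snippet:".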

With everything in place, you’ll be ready to supercharge your development workflow. Using our plugin, you can boost your productivity to match that of an entire team of developers!

Versions of plugin

| Feature | Community | Pro | Enterprise |
|---|---|---|---|
| Working with typical prompts | + | + | + |
| Template support | + | + | + |
| Prompt tuning | + | + | + |
| Integration with prompt repository | – | + | + |
| Image processing (generation and recognition) | – / + | + | + |
| Integration with commercial LLMs | – / + (Qwen only) | + | + |
| Assistant mode: pair programming, architect mode, complex prompts | – | – | + |
| Custom extension points | – | – | + |
| Connecting to the Sandbox: Team Lead Mode | – | – | + |
| Price | Free | Contact sales@artenatech.com | Contact sales@artenatech.com |

Use Cases

With our solution, developers can integrate a self-hosted LLM directly into their favorite development environment, IntelliJ IDEA. The plugin helps you significantly speed up routine tasks, improve code quality, and prepare for professional challenges.

1. Help with preparing code for pull requests

An LLM can improve the readability and quality of your code before you commit changes to the project's main branch. It can:

- Analyze the written code and automatically detect possible errors and stylistic inconsistencies;

- Suggest improvements to formatting and compliance with the code style;

- Generate commit descriptions and help create pull requests, making the changes clear to other team members.

2. Performing routine operations

An LLM in your development environment can automate many repetitive operations, such as:

- Creating code templates and generating the standard fragments the project requires;

- Generating documentation for new functions and classes based on their code and purpose;

- Automatically fixing minor errors such as typos or unused variables.

3. Analyzing other developers’ code

An LLM is well suited to analyzing code written by other developers, which matters when you start working on a new project or join a team. With the model, you can:

- Quickly get a brief description of functions and their relationships;

- Identify potential vulnerabilities or errors;

- Study the code architecture and identify key dependencies;

- Understand which changes may affect other parts of the system.

4. Migrating legacy projects

Migrating legacy projects to modern standards often takes time and a deep understanding of the old codebase. A plugin with LLM support simplifies this process. It:

- Offers recommendations on updating deprecated functions;

- Suggests how to replace deprecated APIs or libraries with modern equivalents;

- Analyzes compatibility and points out possible conflicts when changes are implemented.

5. Interview Task Analysis

For those preparing for technical interviews, the model can be a great coach. It can:

- Solve interview tasks and offer optimized solutions;

- Explain solutions and approaches, making them clearer;

- Support the learning process by offering additional tasks similar to the one being solved, to consolidate skills.

6. Help at hackathons

With the limited time available at a hackathon, it is important to find solutions quickly and work as productively as possible. The LLM plugin in the IDE can:

- Generate code for typical tasks;

- Help with code optimization and performance;

- Identify potential problems and offer solutions, allowing the team to focus on the main idea of the project.

7. Interview Preparation

LLM can be a valuable assistant in interview preparation:

- The model can simulate the real interview process, offering questions and tasks;

- Analyze answers and provide feedback for improvement;

- Provide materials and topics for self-study, helping you prepare for questions that may come up during an interview.

Conclusion

Integrating a self-hosted LLM into a development environment opens up many opportunities to improve the efficiency and quality of your work. From performing routine tasks to preparing for professional challenges, our plugin is becoming an indispensable tool for modern developers.